4.4: Markov Deterioration Models

Markov deterioration models are used in numerous asset management software programs. Markov models can readily accommodate the use of condition indexes with integer values and deterioration estimation for discrete-time periods such as a year or a decade. As a result, Markov models can be combined with typical condition assessment techniques and budgeting processes.

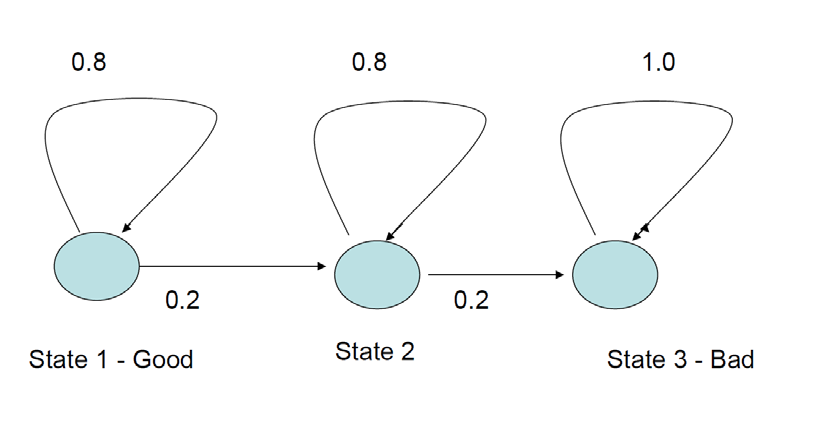

Markov models are stochastic processes with forecast probabilities of transitions among different states (\(x\), where \(x\) is a vector of multiple potential states) at particular times \((t)\) or \(x(t)\). Markov models of this type are often called ‘Markov chains’ to emphasize the transitions among states. For infrastructure component models, the states are usually assumed to be different condition indexes. If a component is in some particular condition state (\(x_i\)) then it might stay in that condition or deteriorate in the next year. Figure 4.4.1 illustrates a process with just three defined condition states (1 – good, 2 – intermediate, 3 – bad condition). If the condition at the beginning of the year is state 2 (intermediate), then the Markov process shows a 0.8 probability or 80% chance of remaining in the same condition and a 0.2 probability or 20% chance of deteriorating to state 3. If the component begins in state 3 (bad or poor condition), then there is no chance of improvement (or a 100% chance of remaining in the same state). State 3 is an ‘absorbing state’ since there is no chance of a transition out of state 3.

The Markov process in Figure 4.4.1 illustrates the pure deterioration hypothesis, in that the component's condition cannot improve over time. Beginning in state 1, the component could deteriorate to state 3 within two years and then remain permanently in state 3. More likely, the component would stay in state 1 or state 2 for a number of years, and the deterioration to state 3 would take multiple years. Note that the transition probabilities do not change over time, so the Markov model assumes that the time spent in any state does not increase the probability of deterioration (this is often called the ‘memoryless property’ of Markov models).

A Markov process may be shown in graphic form as in Figure 4.4.1 or as a table or matrix of transition probabilities. Formally, the state vector is x = (1, 2, 3) and the transition matrix P has three rows and three columns corresponding to the last three columns and bottom three rows in Table 4.4.1 (as shown in Figure 4.4.2). Note that each row of the transition matrix must sum to 1.0 to properly represent probabilities.

| From state \ To state | 1 | 2 | 3 |
|---|---|---|---|
| 1 | 0.8 | 0.2 | 0.0 |
| 2 | 0.0 | 0.8 | 0.2 |
| 3 | 0.0 | 0.0 | 1.0 |
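The row-sum requirement on the transition matrix can be checked mechanically. The following is a minimal sketch in plain Python, using the three-state probabilities from the table; the function names `is_valid_transition_matrix` and `absorbing_states` are illustrative choices and are not part of any asset management package.

```python
# Transition matrix from the table: rows are "from" states, columns are "to" states.
P = [
    [0.8, 0.2, 0.0],  # from state 1 (good)
    [0.0, 0.8, 0.2],  # from state 2 (intermediate)
    [0.0, 0.0, 1.0],  # from state 3 (bad)
]


def is_valid_transition_matrix(matrix, tol=1e-9):
    """Return True if the matrix is square, non-negative, and each row sums to 1."""
    n = len(matrix)
    for row in matrix:
        if len(row) != n:
            return False
        if any(p < 0.0 for p in row):
            return False
        if abs(sum(row) - 1.0) > tol:
            return False
    return True


def absorbing_states(matrix):
    """Return 1-based labels of states with a self-transition probability of 1."""
    return [i + 1 for i, row in enumerate(matrix) if abs(row[i] - 1.0) < 1e-12]


print(is_valid_transition_matrix(P))  # True
print(absorbing_states(P))            # [3]
```

A check of this kind is useful when transition probabilities are typed in by hand or estimated from data, since a row that does not sum to 1.0 would silently distort every later forecast.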

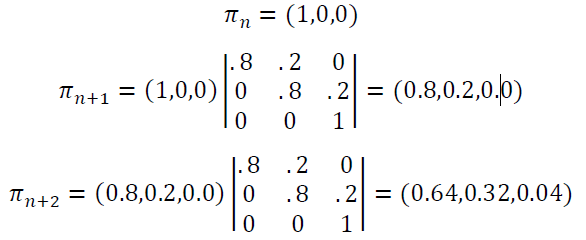

What happens if the infrastructure manager adopts the policy that components in state 3 will always be rehabilitated to state 1? In this case of intervention, there would be a transition from state 3 to state 1 with a 1.0 probability.

Forecasting conditions (or more precisely, forecasting the probability of particular states) with a Markov model involves the application of linear matrix algebra. In particular, a one-period forecast takes the existing state probabilities in period \(n\), \(\pi_n\), and multiplies them by the transition probability matrix:

\[\pi_{n+1}=\pi_{n} * P\]

The forecast calculation may be continued for as many periods as you like. A forecast from period \(n\) to a later period \(m\) would be:

\[\pi_{m}=\pi_{n} * P^{m-n} \label{4.3.1}\]

Using Eq. \ref{4.3.1}, a forecast two periods from now would multiply \(\pi_n\) by \(P*P\). Figure 4.4.2 illustrates the calculations for a two-period forecast using the transition probabilities in Table 4.4.1 and assuming the initial condition is state 1 (good). While it is possible to transition from good condition to bad condition in two periods, the probability of deteriorating this quickly is only 0.04 or 4%. Most likely, the component would remain in good condition for two periods, with probability 0.64 or 64%. As a check on the calculations, note that the forecast probabilities sum to one: \(0.64 + 0.32 + 0.04 = 1.0\).
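The two-period forecast can be reproduced with a few lines of code. This is a minimal sketch in plain Python rather than a reference implementation: `step` and `forecast` are illustrative names, and `P_rehab` is an assumed matrix encoding the rehabilitation policy discussed earlier (state 3 always returns to state 1).

```python
def step(pi, P):
    """One-period forecast: row vector pi multiplied by transition matrix P."""
    n = len(P)
    return [sum(pi[i] * P[i][j] for i in range(n)) for j in range(n)]


def forecast(pi, P, periods):
    """Apply the one-period forecast repeatedly, i.e. pi * P**periods."""
    for _ in range(periods):
        pi = step(pi, P)
    return pi


# Pure deterioration matrix (state 3 is absorbing).
P = [
    [0.8, 0.2, 0.0],
    [0.0, 0.8, 0.2],
    [0.0, 0.0, 1.0],
]

# Assumed variant: components in state 3 are always rehabilitated to state 1.
P_rehab = [
    [0.8, 0.2, 0.0],
    [0.0, 0.8, 0.2],
    [1.0, 0.0, 0.0],
]

pi_0 = [1.0, 0.0, 0.0]  # component starts in state 1 (good)

pi_2 = forecast(pi_0, P, 2)
print([round(p, 4) for p in pi_2])  # [0.64, 0.32, 0.04]

pi_3_rehab = forecast(pi_0, P_rehab, 3)
print([round(p, 4) for p in pi_3_rehab])  # [0.552, 0.384, 0.064]
```

The first result matches the probabilities quoted in the text. The second shows that, under the assumed rehabilitation policy, the 4% of components reaching state 3 after two periods are back in state 1 in the third period.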


The procedure shown in Figure 4.4.2 can be extended to find the median time until component failure. By continuing to forecast further into the future (by multiplying \(\pi\) by P repeatedly), the probability of having entered the absorbing state 3 will increase. The median time until entering this state is the first period in which this probability reaches 0.5 or 50%. It is also possible to calculate the expected or mean time before component failure analytically. However, the median time is likely of more use in planning maintenance and rehabilitation activities for a large number of infrastructure components.
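The median-time search described above can be sketched as a simple loop. The code below is an illustration in plain Python under two assumptions: the target state is absorbing (so the probability of being in it equals the probability of having entered it), and the function name `median_periods_to_state` and the `max_periods` cutoff are hypothetical choices.

```python
def median_periods_to_state(P, start, target, max_periods=1000):
    """Return the first period n at which the probability of being in the
    (absorbing) target state is at least 0.5, or None if never reached.

    States are labelled 1..len(P), matching the condition indexes in the text.
    """
    n_states = len(P)
    if start == target:
        return 0
    pi = [0.0] * n_states
    pi[start - 1] = 1.0  # all probability on the starting state
    for n in range(1, max_periods + 1):
        # One-period forecast: pi <- pi * P
        pi = [sum(pi[i] * P[i][j] for i in range(n_states)) for j in range(n_states)]
        if pi[target - 1] >= 0.5:
            return n
    return None


P = [
    [0.8, 0.2, 0.0],
    [0.0, 0.8, 0.2],
    [0.0, 0.0, 1.0],
]

print(median_periods_to_state(P, start=1, target=3))  # 9
print(median_periods_to_state(P, start=2, target=3))  # 4
```

With these transition probabilities, a component starting in good condition has roughly a 49.7% chance of being in state 3 after eight periods and a 56.4% chance after nine, so the median falls in period nine.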

Numerous software programs can be used to perform the matrix algebra calculations illustrated in Figure 4.4.2. Two popular programs that provide matrix algebra functions are the spreadsheet program Excel and the numerical analysis program MATLAB.

Where would an infrastructure manager obtain transition probability estimates such as those in Table 4.4.1? The most common approach is to create a historical record of conditions and year-to-year deterioration as described in Chapter 3: Condition Assessment or illustrated in Figure 4.1.3. Historical records could give the frequency of deterioration for a particular type of component and for a particular situation. Expert, subjective judgments might also be used, but these expert judgments are themselves informed by analysis or observation of such deterioration over time.
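One simple way to turn a historical record into transition probabilities is to count observed year-to-year transitions and divide by the number of times each starting state was observed. The sketch below assumes this frequency approach; the inspection histories are invented purely for illustration, and the function name `estimate_transition_matrix` is hypothetical.

```python
def estimate_transition_matrix(histories, n_states):
    """Estimate transition probabilities as observed transition frequencies.

    histories: list of condition sequences, one per component, with one
               condition index (1..n_states) per consecutive period.
    """
    counts = [[0] * n_states for _ in range(n_states)]
    for history in histories:
        # Pair each period's state with the next period's state.
        for current, following in zip(history, history[1:]):
            counts[current - 1][following - 1] += 1

    matrix = []
    for i, row in enumerate(counts):
        total = sum(row)
        if total == 0:
            # Assumption: with no observations, treat the state as unchanged.
            matrix.append([1.0 if j == i else 0.0 for j in range(n_states)])
        else:
            matrix.append([c / total for c in row])
    return matrix


# Invented inspection records for three components over six years.
histories = [
    [1, 1, 1, 2, 2, 3],
    [1, 1, 2, 2, 2, 2],
    [1, 2, 2, 3, 3, 3],
]

P_hat = estimate_transition_matrix(histories, n_states=3)
for row in P_hat:
    print([round(p, 3) for p in row])
# [0.5, 0.5, 0.0]
# [0.0, 0.714, 0.286]
# [0.0, 0.0, 1.0]
```

With so few observations the estimates are crude; in practice many more components and years of records would be needed, and separate matrices might be estimated for different component types or operating conditions.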

Finally, we have presented in this section the simplest form of Markov process modelling. We have done so because this simple form seems to be useful for infrastructure management, with many Markov process applications for components such as roadways or bridges. A variety of extensions or variations are possible:

- Rather than modelling discrete annual steps, a Markov model may use continuous time. In this case, the time spent in a state before a transition is modelled with a negative exponential probability distribution.

- If the ‘memoryless’ property of the simple Markov model seems unacceptable, you can adopt a semi-Markov assumption or even augment the state space to include both conditions and age in condition states. Unfortunately, the resulting models become more complicated and require more data for accurate estimation.
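To illustrate the continuous-time variant, the sketch below assumes a constant rate of leaving a state, so that the time spent in the state follows a negative exponential distribution. The rate is chosen to match the 0.8 annual probability of remaining in a state used in this section; the function names are hypothetical.

```python
import math


def stay_probability(rate, t):
    """Probability of still being in the state after time t (exponential sojourn)."""
    return math.exp(-rate * t)


def rate_from_annual_stay_probability(p_stay):
    """Rate (per year) that gives probability p_stay of remaining for one year."""
    return -math.log(p_stay)


rate = rate_from_annual_stay_probability(0.8)

print(round(rate, 4))                               # 0.2231 transitions per year
print(round(1.0 / rate, 2))                         # 4.48 years mean time in state
print(round(1.0 - stay_probability(rate, 5.0), 4))  # 0.6723 chance of leaving within 5 years
```

Unlike the annual model, this form gives probabilities for any time interval, such as half a year or 2.5 years, without changing the model.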

Readers wishing a broader and more mathematically rigorous presentation of Markov processes should consult a book such as Grinstead and Snell (2012), which is available for free download on the internet.